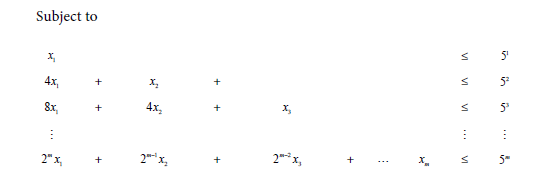

This paper contains novel theoretical contributions to the area of learning-based algorithms in the sense that (i) PLISA is generically applicable to a broad class of sparse estimation problems, (ii) generalization analysis has received less attention so far, and (iii) our analysis makes novel connections between the generalization ability and algorithmic properties such as stability and convergence of the unrolled algorithm, which leads to a tighter bound that can explain the empirical observations. The techniques could potentially be applied to analyze other learning-based algorithms in the literature.

Accelerated Gradient Methods for Sparse Statistical Learning with Nonconvex Penalties. Nesterov's accelerated gradient (AG) is a popular technique to optimize objective functions comprising two components: a convex loss and a penalty function. One component has a Lipschitz continuous gradient, and the other has an analytical proximal mapping or a mapping that can be computed easily. While AG methods perform well for convex penalties, such as the LASSO, convergence issues may arise with nonconvex penalties. The rest of the paper is organized as follows. We detail the proximal operator in Section 2. The numerical algorithms (FBS and ADMM) are described in Section 3 and Section 4, respectively, each with convergence analysis.
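The two-component setting described above (a smooth loss plus a penalty with an easy proximal mapping) can be illustrated with a minimal sketch. It assumes a least-squares loss and the LASSO penalty, whose proximal mapping is soft-thresholding, and uses a FISTA-style momentum schedule as one common form of Nesterov acceleration. The function names and the fixed step size are illustrative choices, not taken from any of the papers excerpted here.

```python
import math


def soft_threshold(v, t):
    """Proximal mapping of t * ||.||_1: shrink each entry toward zero by t."""
    return [max(abs(x) - t, 0.0) * (1.0 if x > 0 else -1.0) for x in v]


def grad_least_squares(A, b, x):
    """Gradient of 0.5 * ||A x - b||^2, i.e. A^T (A x - b)."""
    residual = [sum(a * xj for a, xj in zip(row, x)) - bi
                for row, bi in zip(A, b)]
    return [sum(A[i][j] * residual[i] for i in range(len(A)))
            for j in range(len(x))]


def accelerated_proximal_gradient(A, b, lam, step, n_iter=200):
    """Sketch of accelerated proximal gradient for the LASSO.

    Assumption: `step` is at most 1 / L, where L is the Lipschitz
    constant of the gradient of the least-squares loss.
    """
    n = len(A[0])
    x = [0.0] * n      # current iterate
    y = x[:]           # extrapolated point used for the gradient step
    t = 1.0            # momentum parameter
    for _ in range(n_iter):
        g = grad_least_squares(A, b, y)
        # Forward (gradient) step on the loss, backward (proximal) step on the penalty.
        x_new = soft_threshold([yj - step * gj for yj, gj in zip(y, g)],
                               step * lam)
        t_new = (1.0 + math.sqrt(1.0 + 4.0 * t * t)) / 2.0
        # Nesterov-style extrapolation using the last two iterates.
        y = [xn + ((t - 1.0) / t_new) * (xn - xo)
             for xn, xo in zip(x_new, x)]
        x, t = x_new, t_new
    return x
```

With an identity design matrix the LASSO solution is simply the soft-thresholded observation vector, which gives a quick sanity check for the sketch. For nonconvex penalties the proximal step would change and, as the text notes, convergence is no longer guaranteed by this scheme.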

Abstract: Recovering sparse parameters from observational data is a fundamental problem in machine learning with wide applications. Many classic algorithms can solve this problem with theoretical guarantees, but their performance relies on choosing the correct hyperparameters. Besides, hand-designed algorithms do not fully exploit the particular problem distribution of interest. In this work, we propose a deep learning method for algorithm learning called PLISA (Provable Learning-based Iterative Sparse recovery Algorithm). PLISA is designed by unrolling a classic path-following algorithm for sparse recovery, with some components being more flexible and learnable. We theoretically show the improved recovery accuracy achievable by PLISA. Furthermore, we analyze the empirical Rademacher complexity of PLISA to characterize its generalization ability to solve new problems outside the training set.

In the realm of deterministic optimization, the sequences generated by iterative algorithms (such as proximal gradient descent) exhibit finite activity. Furthermore, non-convex algorithms for 2D sparse recovery are also presented, such as the iterative proximal-projection approach [17] and the L + S model. Here, we propose a new framework, called Proximal-Gen, for CS recovery.

L-BFGS algorithm source code: this code uses sparse coding to optimize the weights, and once the weights have been updated, the optimization cost function. Step 2: Get the.
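To make the idea of a classic path-following algorithm concrete, here is a minimal hand-designed sketch: proximal gradient descent is run in stages, and the regularization weight is decreased geometrically from a large starting value to a target value, with each stage warm-started from the previous one. This is not PLISA itself. It has no learnable components, and the names, the decay schedule, and the fixed number of inner iterations are all assumptions made for illustration.

```python
def soft_threshold(v, t):
    """Proximal mapping of t * ||.||_1: shrink each entry toward zero by t."""
    return [max(abs(x) - t, 0.0) * (1.0 if x > 0 else -1.0) for x in v]


def path_following_ista(A, b, lam_start, lam_target,
                        decay=0.5, step=1.0, inner_iters=50):
    """Sketch of path-following proximal gradient for sparse recovery.

    Assumptions: least-squares loss, l1 penalty, and `step` at most
    1 / L for the Lipschitz constant L of the loss gradient.
    """
    m, n = len(A), len(A[0])
    x = [0.0] * n
    lam = lam_start
    while True:
        # One stage: a fixed number of proximal gradient steps at the current lam.
        for _ in range(inner_iters):
            residual = [sum(a * xj for a, xj in zip(row, x)) - bi
                        for row, bi in zip(A, b)]
            grad = [sum(A[i][j] * residual[i] for i in range(m))
                    for j in range(n)]
            x = soft_threshold([xj - step * gj for xj, gj in zip(x, grad)],
                               step * lam)
        if lam <= lam_target:
            return x
        # Move along the regularization path, warm-starting from x.
        lam = max(lam * decay, lam_target)
```

In a hand-designed method like this, the starting weight, decay rate, and step size are exactly the hyperparameters that must be chosen correctly; an unrolled, learning-based variant would instead treat quantities of this kind as trainable.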
